How Sim-to-Real Transfer Works—Teaching Robots in Virtual Worlds

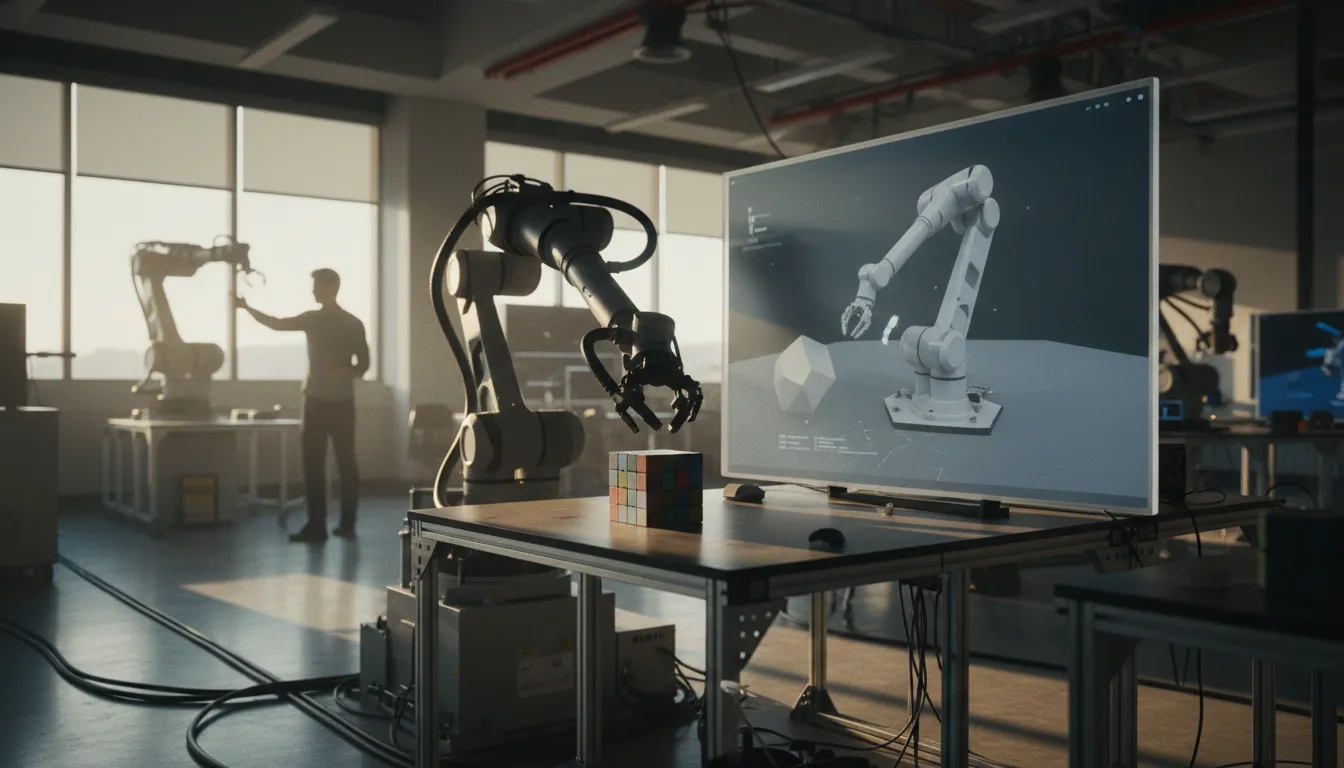

Sim-to-real transfer lets robots learn complex physical skills in virtual simulations before deploying them in the real world, bridging the 'reality gap' through techniques like domain randomization and digital twins.

The Problem: Real Robots, Real Costs

Training a robot to pick up an egg without crushing it, catch a ball, or navigate a crowded warehouse requires millions of attempts. In the physical world, each failed attempt risks broken hardware, wasted time, and potential safety hazards. A single robotic arm can cost tens of thousands of dollars, and trial-and-error learning could take years of continuous operation.

The solution is deceptively simple in concept: let robots make their mistakes in a virtual world first. This approach, known as sim-to-real transfer, has become one of the dominant methods for teaching robots physical skills. It now powers everything from warehouse automation to a Sony robot that defeats professional table tennis players.

How Simulation Training Works

At its core, sim-to-real transfer uses physics simulators: software environments that model gravity, friction, collisions, and object dynamics. Inside these virtual worlds, a robot's digital twin can attempt a task millions of times in hours rather than years. It improves through reinforcement learning, earning rewards for success and penalties for failure.
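To make the reward-and-penalty loop concrete, here is a minimal sketch in Python. It is a toy, not the method used by any system mentioned in this article: the "simulator" is a hypothetical one-dimensional track, and tabular Q-learning stands in for the far larger reinforcement learning setups used in practice. All names (`sim_step`, `train`, `greedy_policy`, `TARGET`) are invented for illustration.

```python
import random

N_POSITIONS = 7      # gripper positions 0..6 on a 1-D track (hypothetical task)
TARGET = 5           # reaching this position counts as success
ACTIONS = (-1, +1)   # move one step left or one step right

def sim_step(pos, action):
    """One step of the toy simulator: returns (new_pos, reward, done)."""
    new_pos = min(max(pos + action, 0), N_POSITIONS - 1)
    if new_pos == TARGET:
        return new_pos, 1.0, True     # reward for success
    if new_pos == 0:
        return new_pos, -1.0, True    # penalty for failure (ran off the left end)
    return new_pos, -0.01, False      # small cost for every extra step

def train(episodes=2000, alpha=0.5, gamma=0.95, epsilon=0.1, seed=0):
    """Tabular Q-learning: learn action values purely from simulated attempts."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_POSITIONS)]
    for _ in range(episodes):
        pos, done = rng.randrange(1, TARGET), False   # random start in 1..4
        while not done:
            if rng.random() < epsilon:                # occasionally explore
                a = rng.randrange(2)
            else:                                     # otherwise act greedily
                a = max((0, 1), key=lambda i: q[pos][i])
            new_pos, reward, done = sim_step(pos, ACTIONS[a])
            target = reward if done else reward + gamma * max(q[new_pos])
            q[pos][a] += alpha * (target - q[pos][a])
            pos = new_pos
    return q

def greedy_policy(q):
    """Best known action (-1 or +1) for every position."""
    return [ACTIONS[max((0, 1), key=lambda i: q[s][i])] for s in range(N_POSITIONS)]

q_table = train()
policy = greedy_policy(q_table)
print(policy[1:TARGET])   # learned moves for the non-terminal positions 1..4
```

The agent is never told that moving right is correct. It discovers that from the rewards alone, which is the property that lets the same idea scale to tasks nobody knows how to program by hand.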

OpenAI's Dactyl project demonstrated this dramatically. A simulated robotic hand with 24 degrees of freedom learned to rotate a cube to specific orientations—a task requiring fine-grained coordination among five fingers. The system accumulated roughly 100 years of simulated experience, then transferred its skills to a physical Shadow Dexterous Hand that performed the task without ever having practiced on a real cube.

The Reality Gap

The central challenge of sim-to-real transfer is the reality gap—the mismatch between simulated and real-world physics. No simulator perfectly replicates how rubber grips a surface, how air resistance affects a spinning ball, or how a motor responds under load. A policy that works flawlessly in simulation can fail catastrophically on a physical robot.
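A small worked example shows how a modest modeling error turns into a visible failure. The sketch below assumes a block pushed across a table and stopped by kinetic friction alone, and both friction values are made up for illustration. The push speed is tuned so the block stops exactly 1 m away in simulation, then the same push is replayed with the "real" friction.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2

def push_speed(distance_m, friction):
    """Initial speed that makes a sliding block stop after distance_m."""
    return math.sqrt(2.0 * friction * G * distance_m)

def slide_distance(speed, friction):
    """Distance a block slides before kinetic friction stops it."""
    return speed ** 2 / (2.0 * friction * G)

SIM_FRICTION = 0.50    # coefficient the simulator assumes (illustrative)
REAL_FRICTION = 0.65   # coefficient of the physical table (illustrative)

speed = push_speed(1.0, SIM_FRICTION)             # tuned in simulation
in_sim = slide_distance(speed, SIM_FRICTION)      # hits the 1 m target
in_real = slide_distance(speed, REAL_FRICTION)    # same push, real table

print(f"simulated: {in_sim:.3f} m, real: {in_real:.3f} m")
```

Under these assumptions the block stops after roughly 0.77 m on the real table, about 23 cm short, even though the push was perfect in simulation.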

Researchers have developed several techniques to close this gap:

- Domain randomization: The simulator deliberately varies physical parameters—friction coefficients, object masses, lighting conditions, motor delays—across thousands of training runs. By learning to succeed despite constant variation, the robot develops policies robust enough to handle real-world unpredictability.

- System identification: Engineers carefully measure and calibrate the simulator to match the real robot's exact physical properties, narrowing the gap through precision rather than randomization.

- Real-to-sim-to-real loops: MIT researchers developed methods in which robots first gather a small amount of real-world data, use it to build a more accurate digital twin, then train new policies in the improved simulation before returning to reality.
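The three techniques above can be sketched on a single toy problem: pushing a block so that it slides to a stop 1 m away. Everything here is an assumption made for illustration, including the friction-only physics, the parameter ranges, and the function names. A simple grid search stands in for reinforcement learning, and the "real" measurements are generated from a hidden friction value rather than taken from hardware.

```python
import math
import random

G = 9.81  # gravitational acceleration, m/s^2

def slide_distance(speed, friction):
    """Toy simulator: distance a pushed block slides before friction stops it."""
    return speed ** 2 / (2.0 * friction * G)

def mean_error(speed, frictions, target_m):
    """Average miss distance of one push speed across many simulated tables."""
    misses = [abs(slide_distance(speed, mu) - target_m) for mu in frictions]
    return sum(misses) / len(misses)

def train_with_randomization(target_m, mu_range, n_worlds=500, seed=0):
    """Domain randomization: draw a different friction value for each simulated
    world, then keep the push speed with the lowest average error over all of
    them. (Grid search is a stand-in for a learning algorithm.)"""
    rng = random.Random(seed)
    frictions = [rng.uniform(*mu_range) for _ in range(n_worlds)]
    candidates = [0.5 + 0.01 * i for i in range(500)]   # 0.50 .. 5.49 m/s
    return min(candidates, key=lambda v: mean_error(v, frictions, target_m))

def identify_friction(speeds, distances):
    """System identification: least-squares fit of friction from measured pushes.
    The model d = v^2 / (2*mu*G) is linear in x = v^2 / (2*G) with slope 1/mu."""
    xs = [v ** 2 / (2.0 * G) for v in speeds]
    slope = sum(x * d for x, d in zip(xs, distances)) / sum(x * x for x in xs)
    return 1.0 / slope

# 1. Domain randomization: one push speed that copes with friction in 0.4..0.8.
robust_speed = train_with_randomization(1.0, (0.4, 0.8))

# 2. System identification from a few "real" pushes (noise-free stand-in data
#    generated from a hidden friction value of 0.65).
test_speeds = [1.0, 1.5, 2.0]
measured = [slide_distance(v, 0.65) for v in test_speeds]
mu_hat = identify_friction(test_speeds, measured)

# 3. Real-to-sim-to-real: rebuild the simulator around mu_hat and re-tune there.
calibrated_speed = math.sqrt(2.0 * mu_hat * G * 1.0)

print(f"robust: {robust_speed:.2f} m/s, identified mu: {mu_hat:.3f}, "
      f"calibrated: {calibrated_speed:.2f} m/s")
```

The trade-off shows up even in this toy. The randomized push is acceptable on any table in the range but exact on almost none, while the calibrated push is exact for the one table that was measured and carries no guarantee elsewhere.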

From Labs to Factories

Sim-to-real transfer has moved well beyond research demonstrations. NVIDIA's Isaac Sim platform lets manufacturers build virtual replicas of entire factory floors where robot fleets can be trained and tested together before physical deployment. Agility Robotics uses simulation-trained policies for its Digit humanoid, which Amazon has tested for tote handling in its warehouse operations.

Sony AI's Project Ace, published in Nature in April 2026, showcased the technique's sophistication. Its table tennis robot—trained through reinforcement learning in simulation—tracks a ball 200 times per second and reacts with 20-millisecond latency, roughly ten times faster than a human player. It defeated professional players in official matches, marking the first time an autonomous robot achieved expert-level performance in a competitive physical sport.

Why It Matters

The implications extend far beyond table tennis. As simulation fidelity improves and computing costs fall, sim-to-real transfer is accelerating a fundamental shift: robots that learn general physical intelligence rather than following rigid, pre-programmed routines. Industry analysts expect humanoid robot deployments to scale from hundreds in 2025 to thousands by 2027, with simulation-trained dexterity as a key enabler.

The approach also democratizes robotics development. Companies no longer need massive fleets of physical prototypes to develop capable robots—a powerful GPU cluster and a good simulator can accomplish what once required years of real-world experimentation. The virtual training ground is becoming the foundation on which the next generation of physical AI is built.