OpenAI Signs Pentagon Deal With Unprecedented AI Safeguards

OpenAI has struck a deal with the U.S. Department of Defense to deploy its AI models on classified networks, establishing three non-negotiable red lines: no mass domestic surveillance, no autonomous weapons, and no high-stakes automated decisions without human oversight.

A Historic Agreement, Struck in Hours

On the evening of February 27, 2026, OpenAI CEO Sam Altman announced on social media that his company had "reached an agreement with the Department of War to deploy our models in their classified network," using the administration's secondary name for the Department of Defense. The company described the deal as carrying more guardrails than any previous classified AI deployment agreement. It was signed within hours of one of the most dramatic episodes in the short history of military AI contracting.

The Anthropic Fallout That Set the Stage

The backdrop was explosive. Defense Secretary Pete Hegseth had earlier that Friday designated rival AI laboratory Anthropic a "supply chain risk to national security" — an extraordinary and legally contested move that effectively barred military contractors from doing business with the company. President Trump simultaneously ordered all federal agencies to immediately cease using Anthropic's services.

The dispute stemmed from Anthropic's refusal to let its AI be used without firm restrictions against mass domestic surveillance and fully autonomous weapons. The Pentagon had demanded acceptance of an "all lawful use" clause, giving the military broad discretion over how AI models could be deployed. Anthropic refused. OpenAI, within hours, stepped into the gap.

Anthropic called the supply chain designation "legally unsound" and vowed to challenge it in court, arguing that Hegseth lacks the legal authority to ban contractors from doing business with a private company.

Three Red Lines OpenAI Won't Cross

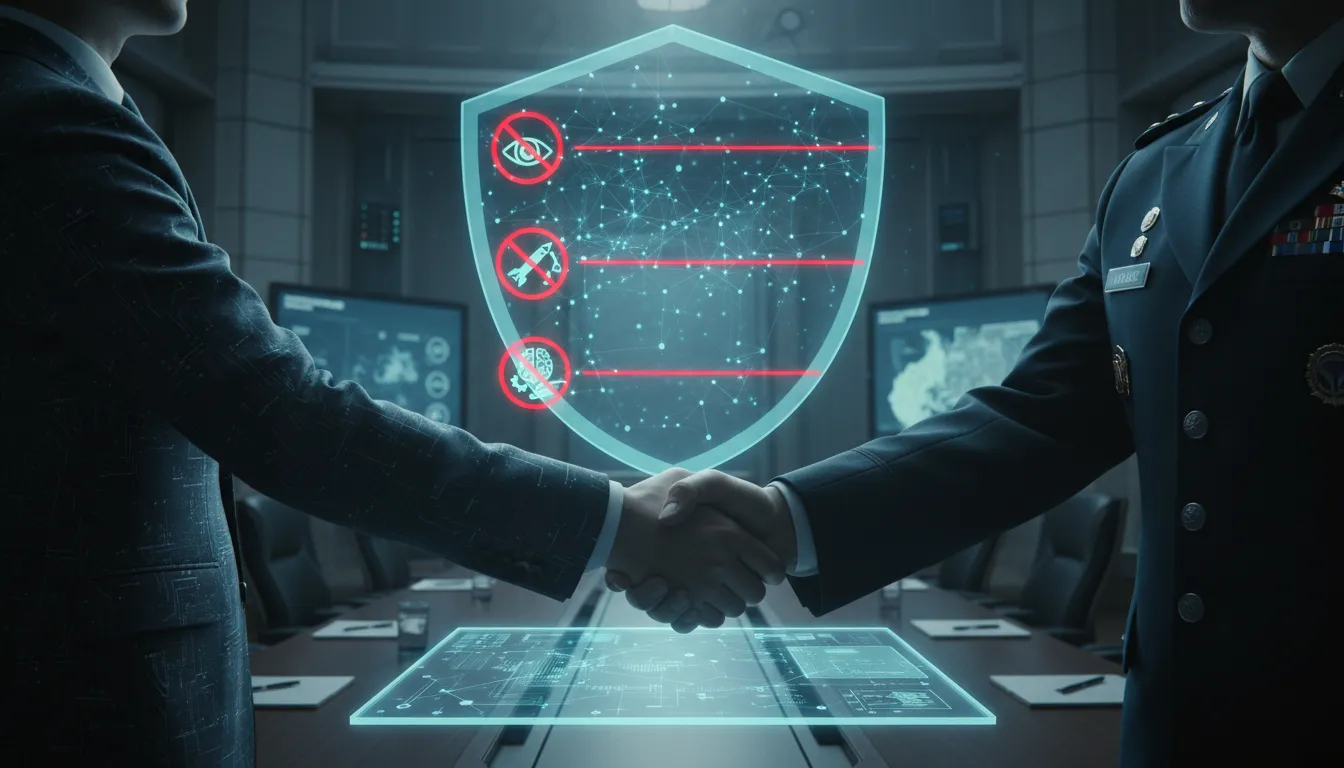

What distinguishes the OpenAI deal is the explicit codification of ethical limits. OpenAI published three hard restrictions — what it calls red lines — that are embedded in the contract itself and backed by technical enforcement:

- No mass domestic surveillance — OpenAI technology cannot be used to surveil American citizens at scale

- No autonomous weapons systems — AI models cannot be used to direct lethal autonomous weapons without human oversight

- No high-stakes automated decisions — systems akin to "social credit" scoring are explicitly prohibited

According to OpenAI, the Pentagon agreed to these principles, which are also reflected in existing U.S. law and policy. Crucially, OpenAI retains control over which models are deployed and how technical safeguards are implemented. Deployment is limited to cloud environments rather than edge devices, specifically to rule out autonomous lethal use in the field.

A $200 Million Race for Military AI

The financial stakes are significant. The Pentagon has entered into agreements valued at up to $200 million each with multiple major AI laboratories, including OpenAI, in what amounts to a structured competition for dominance in military AI applications. Other companies, including xAI, have accepted the Pentagon's "all lawful use" framework without the safeguards OpenAI negotiated.

The speed of the OpenAI deal — announced the same evening Anthropic was blacklisted — drew sharp attention from observers and OpenAI's own employees. A group of staff from both OpenAI and Google publicly demanded that their companies establish clear "red lines" before signing any defense contracts, warning against the normalization of AI in lethal decision-making.

A New Precedent — or a Compromise?

Altman framed the deal as a model for responsible AI in national security contexts, noting that the technical safeguards — including classifiers that OpenAI can run and update independently — give the company meaningful oversight even within a classified government environment.

Critics remain skeptical. The agreement places significant trust in contractual promises and proprietary technical controls, with limited external verification. But for now, the OpenAI-Pentagon deal sets a new benchmark: the first time an AI company has negotiated legally binding ethical constraints into a classified U.S. military AI deployment.