How Orbital Data Centers Work—and Why They Matter

As AI demand strains Earth's power grids, companies are racing to put data centers in orbit, where near-continuous solar energy and passive radiative cooling could transform cloud computing.

Computing Leaves the Ground

Data centers already consume roughly 1–2 percent of the world's electricity, and AI workloads are pushing that figure higher every year. Cooling alone can account for 40 percent of a facility's energy bill. Land, water, and grid capacity are all becoming scarce. A growing number of engineers and investors believe the solution is radical: move the servers off the planet entirely.

Orbital data centers (ODCs) are computing facilities designed to operate in low-Earth orbit, typically 500–600 km above the surface. They process data in space rather than beaming raw information down to ground stations, slashing bandwidth requirements and enabling near-real-time analysis of satellite imagery, climate data, and communications traffic.

How They Work

The basic architecture is straightforward. Satellites and sensors collect raw data—images, telemetry, signals intelligence—and relay it via optical link to a nearby orbital compute node. That node runs AI inferencing, filters images, detects features, or compresses files before sending only the most valuable results to Earth. If connectivity drops, the node buffers data, makes autonomous decisions, and triggers alerts on its own.
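The filter-then-forward behavior described above can be sketched in a few lines of Python. This is a toy model, not any vendor's flight software: the `Observation` and `ComputeNode` classes, the `score` field, and the 0.8 threshold are all hypothetical names and values chosen for illustration.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Observation:
    sensor_id: str
    score: float    # hypothetical "value" score assigned by an onboard model
    payload: bytes


class ComputeNode:
    """Toy orbital compute node: filter in orbit, then downlink or buffer."""

    def __init__(self, threshold=0.8, buffer_size=1000):
        self.threshold = threshold                 # assumed cutoff for "valuable"
        self.buffer = deque(maxlen=buffer_size)    # oldest items drop when full
        self.downlinked = []                       # stands in for the ground link

    def ingest(self, obs, link_up):
        # Discard low-value data in orbit instead of sending it to Earth.
        if obs.score < self.threshold:
            return "dropped"
        if link_up:
            self.flush()                           # clear any backlog first
            self.downlinked.append(obs)
            return "sent"
        self.buffer.append(obs)                    # hold until the link returns
        return "buffered"

    def flush(self):
        # Send anything held back during an outage, oldest first.
        while self.buffer:
            self.downlinked.append(self.buffer.popleft())


node = ComputeNode()
results = [
    node.ingest(Observation("sat-1", 0.95, b"fire"), link_up=False),
    node.ingest(Observation("sat-1", 0.10, b"ocean"), link_up=True),
    node.ingest(Observation("sat-2", 0.90, b"flood"), link_up=True),
]
print(results)
```

In this run the first observation is held during the outage, the second is discarded as low value, and the third triggers a flush so the backlog reaches the ground ahead of it. A real node would also have to decide what to drop when the buffer fills, which the bounded `deque` handles here by silently discarding the oldest entry.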

Power comes from solar panels. In a dawn-dusk sun-synchronous orbit, a spacecraft rides the boundary between day and night on Earth, keeping its panels in near-constant sunlight. Solar irradiance in orbit is roughly 36 percent higher than peak sunlight on the ground (about 1,361 watts per square meter versus 1,000), with no clouds, no nighttime interruptions, and no atmospheric losses.
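The 36 percent figure can be checked with simple arithmetic. The sketch below assumes two commonly cited values: a solar constant of about 1,361 W/m² above the atmosphere and the 1,000 W/m² clear-sky peak used to rate ground panels.

```python
# Assumed reference values, chosen for this check:
SOLAR_CONSTANT_W_M2 = 1361.0   # irradiance above the atmosphere at Earth's distance
GROUND_PEAK_W_M2 = 1000.0      # standard clear-sky peak used for panel ratings


def orbital_gain_percent(orbit=SOLAR_CONSTANT_W_M2, ground=GROUND_PEAK_W_M2):
    """Percentage by which orbital irradiance exceeds ground irradiance."""
    return (orbit / ground - 1.0) * 100.0


gain = orbital_gain_percent()
print(f"Orbital irradiance is about {gain:.0f}% higher than the ground peak")
```

This compares peak against peak. Averaged over a full day, a ground panel also loses output to night, weather, and low sun angles, so the gap in delivered energy is considerably wider than the instantaneous figure suggests.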

Cooling exploits the vacuum of space. Large radiator panels dump waste heat directly into deep space through passive radiative cooling, with no water-hungry chillers required. A one-square-meter black plate at 20 °C radiates about 838 watts to deep space when both faces are counted, roughly three times the electricity a same-sized solar panel generates.
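The radiator figure follows from the Stefan-Boltzmann law, which says an ideal black surface radiates power in proportion to the fourth power of its absolute temperature. The sketch below reproduces the number under simplifying assumptions: a perfect emitter (emissivity of 1), no heat absorbed from the Sun or Earth, and, for the comparison, a hypothetical 20 percent efficient solar panel under 1,361 W/m².

```python
SIGMA = 5.670374419e-8         # Stefan-Boltzmann constant, W / (m^2 K^4)
SOLAR_CONSTANT_W_M2 = 1361.0   # assumed irradiance in orbit
PANEL_EFFICIENCY = 0.20        # assumed efficiency, chosen for illustration


def radiated_power_w(area_m2, temp_c, emissivity=1.0, sides=2):
    """Power radiated by a flat plate, ignoring any heat it absorbs."""
    temp_k = temp_c + 273.15
    return emissivity * SIGMA * area_m2 * sides * temp_k ** 4


def panel_output_w(area_m2):
    """Electrical output of a sun-facing panel of the same area."""
    return SOLAR_CONSTANT_W_M2 * PANEL_EFFICIENCY * area_m2


plate_w = radiated_power_w(area_m2=1.0, temp_c=20.0)
ratio = plate_w / panel_output_w(1.0)
print(f"Radiated: {plate_w:.1f} W, ratio to panel output: {ratio:.2f}")
```

Under these assumptions the plate sheds just under 838 W, a little more than three times the panel's output. Real radiators fall short of that ideal because their emissivity is below 1 and because they pick up some heat from sunlight and from Earth.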

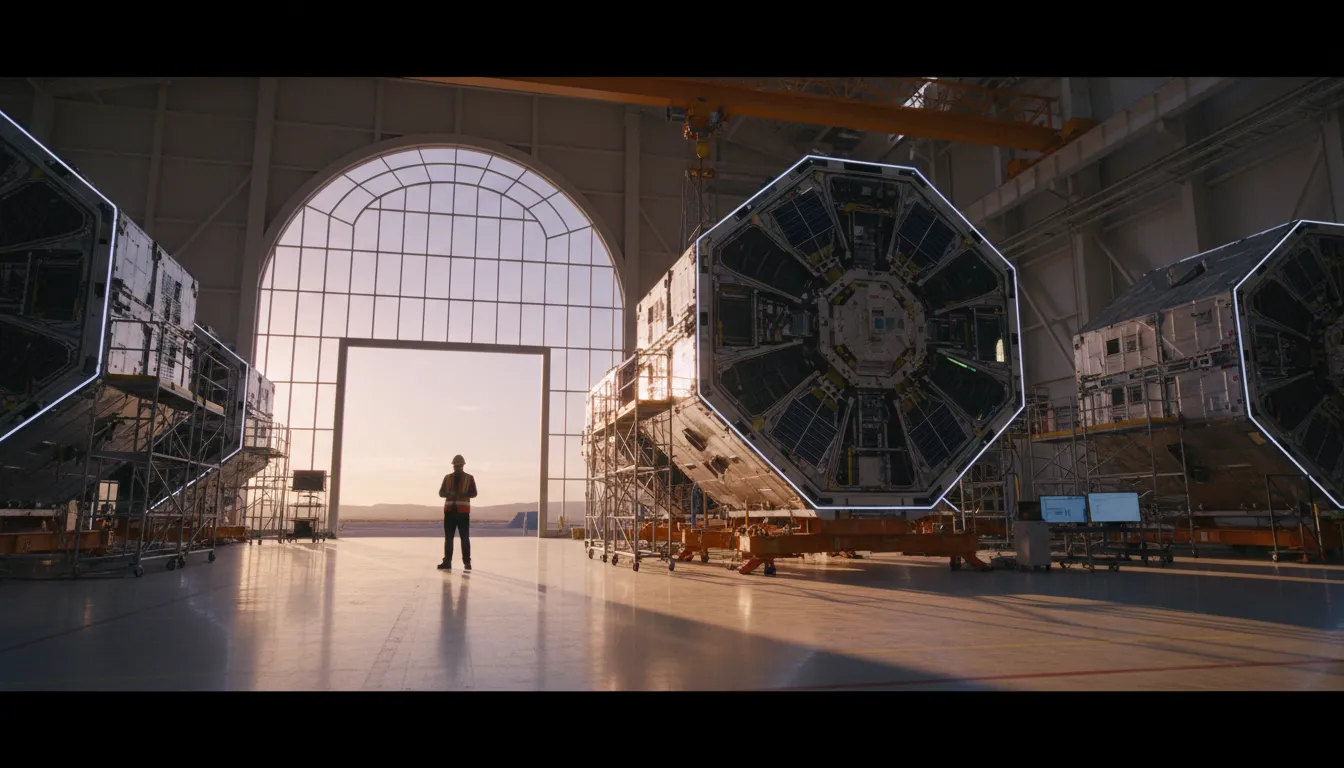

Who Is Building Them

Several companies are racing to make the concept real. Starcloud (formerly Lumen Orbit), a Redmond-based startup backed by Y Combinator, launched its first satellite in late 2025. It carries an Nvidia H100 GPU, billed as 100 times more powerful than any processor previously operated in space. The company envisions megawatt-scale server clusters powered by solar arrays stretching up to four kilometers wide.

Axiom Space is developing orbital infrastructure under NASA's Commercial LEO Development Program, with plans to dock its first module to the International Space Station. Planet Labs, already operating hundreds of Earth-observation satellites, is integrating edge AI to process imagery in orbit rather than on the ground.

In March 2026, Nvidia announced its Space-1 Vera Rubin Module at GTC, delivering up to 25 times more AI compute than the H100 for space-based inferencing. Partners include Axiom, Starcloud, and several other space operators.

The Advantages

Proponents cite several benefits beyond clean energy and passive cooling:

- No land-use conflicts. Space has no property taxes, zoning restrictions, or neighbors objecting to construction.

- Lower carbon footprint. Starcloud estimates a solar-powered ODC could produce one-tenth the carbon emissions of a natural-gas-powered terrestrial facility.

- Edge processing. Analyzing satellite data in orbit eliminates the bottleneck of downlinking terabytes to ground stations.

- Physical security. Facilities hundreds of kilometers above Earth are inherently difficult to access or attack.

The Challenges

Skeptics raise serious obstacles. Launch costs remain the largest barrier: every kilogram of hardware must ride a rocket. Radiation in orbit degrades electronics, requiring shielding or radiation-hardened chips, which must be replaced every five to six years. Latency between orbit and Earth, while acceptable for batch processing, rules out applications that need single-digit-millisecond response times.
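The latency concern can be sized with a rough speed-of-light estimate. The sketch below assumes a 550 km altitude (the middle of the range given earlier), a spherical Earth of radius 6,371 km, and a direct link between the ground station and the satellite. The 25-degree elevation case is a hypothetical example of a satellite that is not directly overhead.

```python
import math

EARTH_RADIUS_KM = 6371.0        # mean radius, spherical-Earth assumption
LIGHT_SPEED_KM_S = 299_792.458


def slant_range_km(altitude_km, elevation_deg):
    """Distance from a ground station to a satellite seen at a given elevation."""
    r = EARTH_RADIUS_KM
    e = math.radians(elevation_deg)
    return math.sqrt((r + altitude_km) ** 2 - (r * math.cos(e)) ** 2) - r * math.sin(e)


def round_trip_ms(altitude_km, elevation_deg):
    """Speed-of-light round trip only: no processing, queuing, or relay hops."""
    return 2 * slant_range_km(altitude_km, elevation_deg) / LIGHT_SPEED_KM_S * 1000


overhead_ms = round_trip_ms(550, 90)   # satellite directly overhead
low_pass_ms = round_trip_ms(550, 25)   # satellite lower in the sky
print(f"overhead: {overhead_ms:.1f} ms, 25 degrees elevation: {low_pass_ms:.1f} ms")
```

Propagation alone comes to roughly 3.7 ms in the best case and about 7.5 ms at the lower elevation. Those are floors, not estimates of real performance: onboard processing, inter-satellite relays, and the terrestrial network all add delay on top, which leaves little or no room inside a single-digit-millisecond budget.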

Space debris poses an existential risk. More hardware in orbit increases the chance of collisions, potentially triggering a runaway cascade known as Kessler syndrome. And while solar power is abundant, building and deploying kilometers-wide panel arrays is an engineering feat that has never been attempted at commercial scale.

What Comes Next

The first two operational ODC nodes reached low-Earth orbit in January 2026, proving the concept is no longer theoretical. As launch costs continue to fall—driven largely by reusable rockets—and AI demand continues to rise, the economic case for orbital computing strengthens. Whether space-based data centers become a mainstream pillar of cloud infrastructure or remain a niche for satellite operators, they represent one of the most ambitious answers yet to the question of how to power the AI era without exhausting the planet.